Much has been written about the problems of hate speech on social media and the concern that high profile mainstream platforms like Twitter, Facebook and Instagram have been hijacked by far right haters pushing an extremist agenda.

Twitter in particular has acknowledged there is a problem relating to the use of its platform for organised right wing troll gangs to form alliances and spread propaganda to confuse and disrupt the genuine messages put out by credible news sources.

Twitter claims to take hate seriously and states in its Terms of Service that “We prohibit behaviour that crosses the line into abuse.” It recently updated the ToS to include a Hateful Conduct Policy where it makes the claim “You may not promote violence against or directly attack or threaten other people on the basis of race, ethnicity, national origin, sexual orientation, gender, gender identity, religious affiliation, age, disability or disease.” It specifically states they do not tolerate “violent threats, wishes for the physical harm, death or disease of individuals or groups.”

The problems with hate speech on Twitter are not a recent issue. Back in 2015, a full year before the EU referendum and long before anybody had seriously considered that Donald Trump might be successful in his bid for USA President, Twitter were battling threats of violence in the form of tweets. In April 2015, in a bid to clamp down on online hate, Twitter announced that not just threats of violence but indirect threats of violence would be taken seriously, with consequences for the offending user.

Later in 2015 Twitter came under fire for not doing enough to tackle abusive speech on their platform and publicly announced: “As always, we embrace and encourage diverse opinions and beliefs, but we will continue to take action on accounts that cross the line into abuse.”

Despite calls from activists and anti-hate groups to tackle the problems at source (i.e. the individual users posting threats and hate speech), Twitter’s answer was to introduce a mute function. This gave users greater control over the messages to which they were exposed but did nothing to remove the hate itself from the social media platform. To all intents and purposes, this attempt to plaster over the cracks was a win for haters.

In May 2016 several social media providers, including Twitter, agreed to adhere to a new online code of conduct established by the EU. The basic principle was that hate speech should be removed within a timescale of less than 24 hours from the company being notified of its presence. Twitter’s then Head of Policy made the commitment that “Hateful conduct has no place on Twitter and we will continue to tackle this issue head on alongside our partners in industry and civil society. We remain committed to letting the tweets flow. However, there is a clear distinction between freedom of expression and conduct that incites violence and hate.”

In February 2017 Twitter announced further new security measures which would stop habitually abusive users from making new accounts, once suspended, to continue with their abuse.

Twitter’s dirty laundry received another public airing in August 2017 when artist Shahak Shapira stencilled some of the worst tweets on the ground outside Twitter HQ. Twitter’s reply to this was “Over the past six months we’ve introduced a host of new tools and features to improve Twitter for everyone.”

The Twitter abuse rules tightened again in October 2017 with Twitter’s CEO Jack Dorsey finally conceding that the measures so far put in place had still not addressed the problem of hate on Twitter. This coincided (rather conveniently) with Twitter’s much criticised decision to suspend actress Rose McGowan, who had used the platform to speak publicly about the sexual abuse she had received. (Ms McGowan has since been reinstated by Twitter.)

In November this year Twitter suspended its verification system (the infamous blue tick) after a public outcry that it had endorsed notorious neo-Nazi Jason Kessler. Twitter acknowledged there was confusion between verification and validation and agreed to review the blue ticks of those currently enjoying enhanced privileges on the site. Several high profile far right figures, including convicted fraudster Stephen Yaxley-Lennon and Richard Spencer, were demoted to standard accounts.

The next big date in the Twitter v Hate wars is December 18. Twitter has given official notice that from this date it will suspend accounts that use hate symbols on the site or that affiliate with violent hate groups, either on or off the site.

I would like to say I feel confident about Twitter’s intentions but, as co-founder of our anti-hate group, frankly I have little faith that Twitter will put the effort into achieving its objective. So far, despite the hype, the publicity and the promises, I have seen scant evidence of genuine commitment from Twitter with regard to eradicating hate from their platform.

I received this public threat to rape and murder me this week. (Remember Twitter saying wishes for physical harm broke their ToS?) To my knowledge it has been reported by no fewer than 200 people. The account has not been suspended. The account is still tweeting abuse.

This account has had over fifty incarnations with every single one titled DieJewDie. Twitter are either unwilling or unable to stop it. It continues to post Anti-Semitic hate.

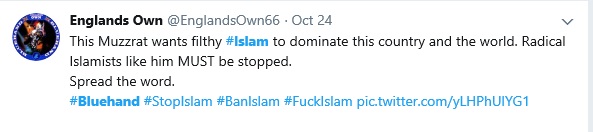

This hate group has numerous members posting Islamophobia. Many of these accounts to date have still not been suspended.

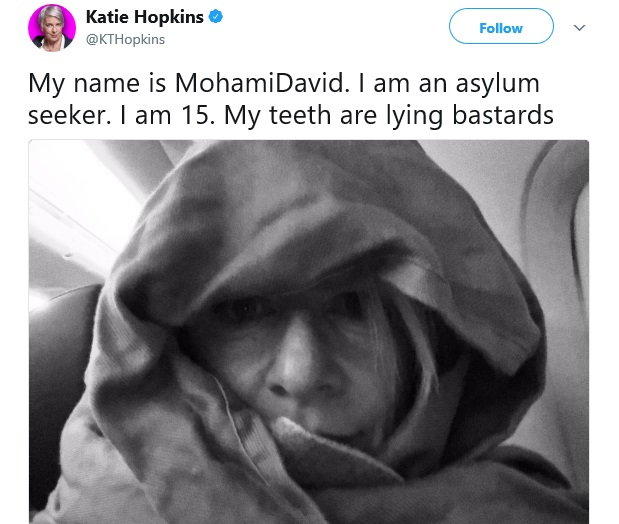

Katie Hopkins still has her verified status despite posting this:

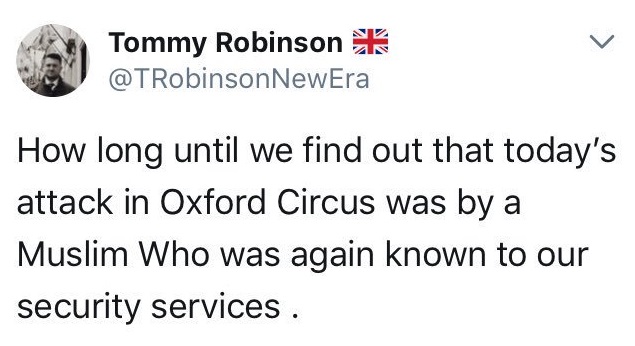

Convicted fraudster Stephen Yaxley-Lennon is still allowed to spread hate to his 387,000 followers despite tweeting this in the aftermath of the high alert at Oxford Circus:

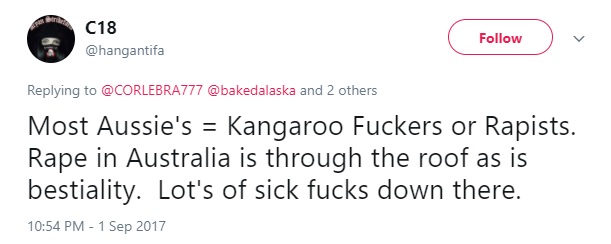

We have hundreds and hundreds of tweets like the one below in our database, many of which have been reported by 100+ people. Fewer than 10% of these extremist hate tweets result in an actual account suspension.

Twitter are quick to promise but bloody slow to deliver. As for December 18th being the deadline for hate on their site? I’ll believe it when I see it @Jack.

Roanna Carleton Taylor

Roanna Carleton Taylor is one of the founder members of Resisting Hate. She is the author of many of our articles, and also writes occasionally for other media publications including Huff Post, Byline Times and Immigration News. Roanna loves German Shepherd Dogs and Oil Painting.